Emerging technologies often exacerbate existing risks for marginalised communities, especially when governments or companies use them to increase their control. A strong civic voice is therefore needed to advocate for safeguards that consider the perspectives and needs of at-risk groups. This is essential for building just, inclusive societies where digital tools work for everyone, not just the powerful.

Can Artificial Intelligence (AI) tools and platforms really make engagement in policymaking more inclusive and impactful, or do they cause more harm to already excluded voices? Based on our research and discussions with European Union (EU) institutions, platform developers and organisations, we mapped the potential benefits and risks of these technologies and put forward recommendations on how platform operators can ensure due diligence when using AI systems to enhance public participation.

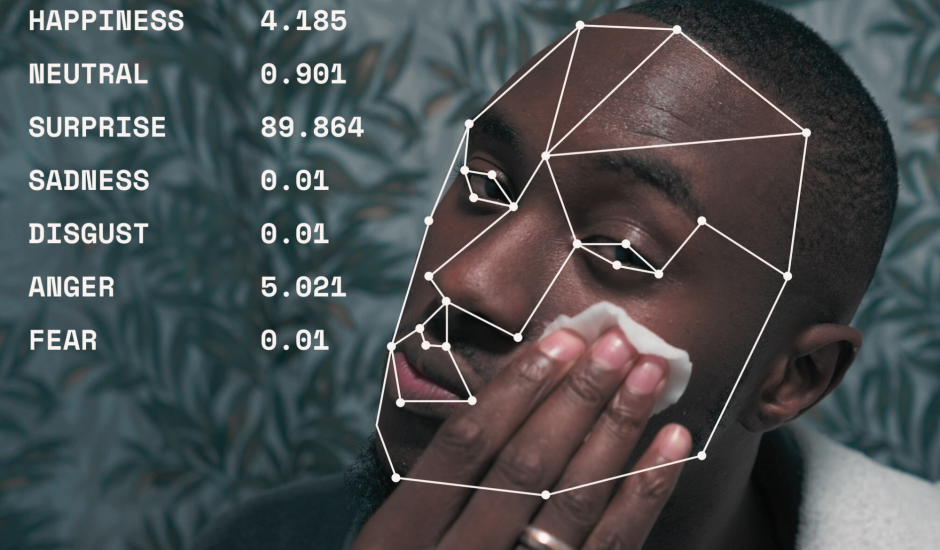

In recent years, we have seen a surge in algorithm-driven biometric surveillance, even though these systems are fundamentally incompatible with democracy and human rights. Feedback from our partners highlighted a pressing need to better understand the range of tactics and strategies civil society groups can use to push back against such technologies. To support their action, our report showcases successful campaigns against biometric surveillance from across Europe, the United States and Latin America. Our aim is for this research to serve as an initial blueprint for civil society organisations (CSOs) worldwide to act against biometric surveillance in their communities.

Thanks to these actions:

- Civil society is better equipped with strategies to challenge emerging technologies.

- ECNL has shaped AI policy debates to advance safeguards for inclusive participation.