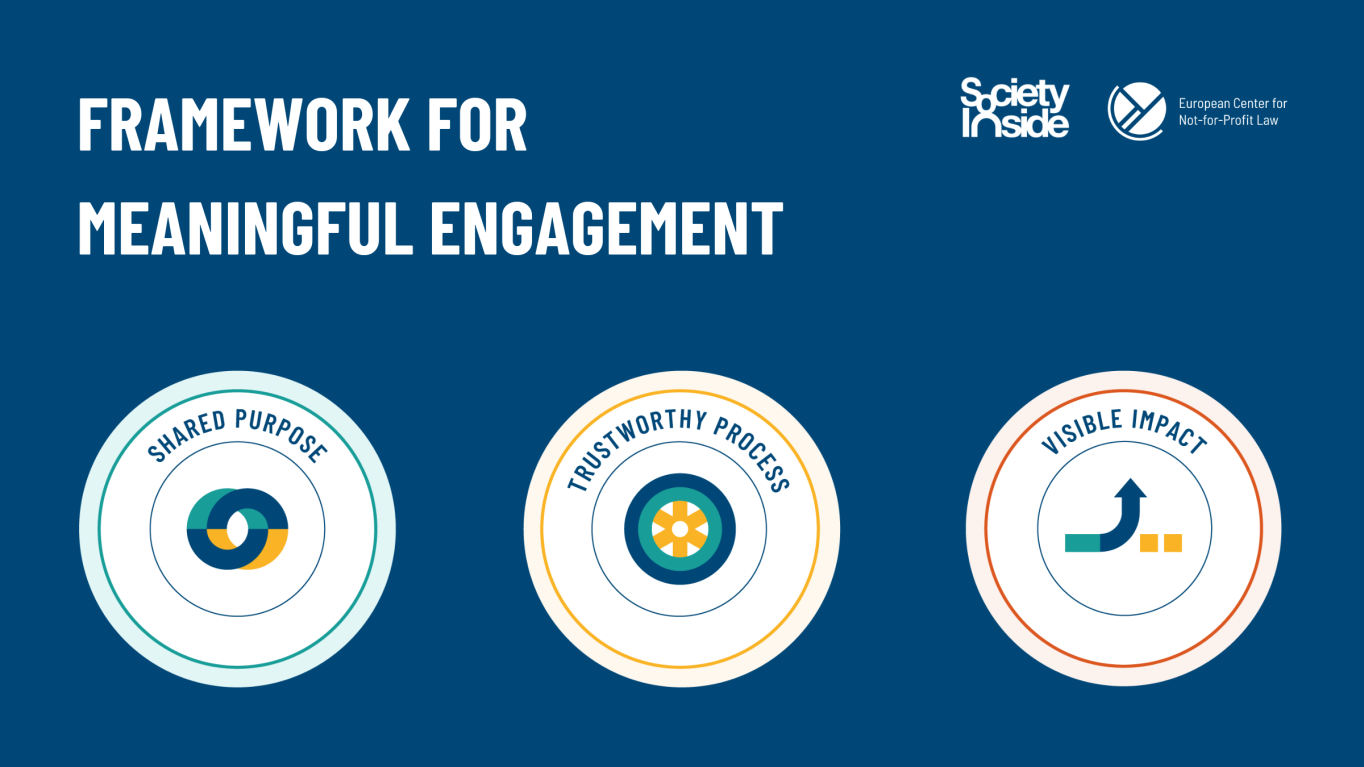

As the use of artificial intelligence (AI) has increased, so has the push for human rights impact assessments of the developing technology. ECNL and SocietyInside, with cross-sector input from the Action Coalition on Civic Engagement in AI Design, amongst others, have developed a Framework for meaningfully engaging people during these impact assessments.

The Framework was born out of the need for a clear process, helpful tools, and standard setting to make engagement meaningful in practice. The aim is that, by using this Framework, both developers and those engaged feel that they have collaboratively created concrete results. Human rights impact assessments need to include diverse voices, disciplines, and lived experiences from a variety of external stakeholders, and the Framework places an emphasis on engaging those most at risk from the harms of AI.

In 2023, we continue to pilot the practical implementation of the Framework and test its usefulness with the City of Amsterdam, a public body developing AI for its citizens, and with an AI-driven social media platform.

In addition, we will continue consulting civil society and other stakeholders on the content and future iterations of the Framework, a living document that evolves in parallel with practical needs. If you are interested in providing additional input, ideas, or suggestions for a pilot implementation, please reach out to us at [email protected], or on Twitter, LinkedIn, or Mastodon.